Projecting into the Future

In my recent posting on estimation precision, I briefly mentioned how you can estimate when certain features will be done, or estimate what features will be done by a given date, using a burn-up chart. Some people don’t find this an obvious and easy thing to do. Let me explain in more detail.

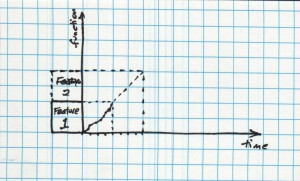

I’ll start with the assumption that you have a list of features you want to add to an application. Some of the things you want to add may not properly be called features–they might be bug fixes, for example. And the application might be brand-new, with zero functionality so far. That’s OK; we can handle cases like that. Let’s walk through how I do this…

Here we see a burnup chart showing the development of the first feature of a project. This “Feature 1” took the team 4 iterations to complete.

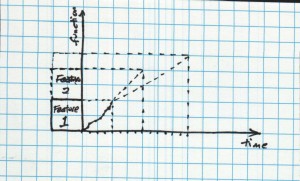

Now we want to develop “Feature 2.” Is it about the same size as Feature 1? Bigger? Smaller? It’s really hard to say, isn’t it?

We could head down the path of precision estimates, looking at the detail of the work to implement Feature 1 and the imagined detail of the work to implement Feature 2. We could measure the person-hours spent on Feature 1 and apply adjustments for sick days, holidays, planned hiring, and a myriad of other factors. I find that such an approach not only doesn’t help me answer the questions I have, it distracts me from them.

Instead, I like to estimate features in “T-shirt sizes.” A simple range of small, medium, large, and extra-large is “close enough.”

When we’re just starting out on a new project, we don’t have enough information to make wise choices. So let’s make quick ones. I’m going to call Feature 1 a “medium” feature. Given the lack of detail about Feature 2, let’s call it “medium” also.

So we project another increment of work, the same size, accomplished at the same rate. Surprise, surprise, surprise! We project it will take another 4 iterations. We didn’t need a burnup chart to tell us that.
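The arithmetic behind that projection is simply size divided by rate. Here is a minimal Python sketch, assuming we measure size in units of Feature 1 (one “medium”) and velocity as mediums completed per iteration; the names are illustrative, not taken from any tool:

```python
from fractions import Fraction
import math

# Feature 1 is our only data point: one "medium" took the team 4 iterations.
FEATURE_1_ITERATIONS = 4
MEDIUM = Fraction(1)  # size measured in "mediums"; Feature 1 defines the unit

# Observed velocity: mediums completed per iteration.
velocity = MEDIUM / FEATURE_1_ITERATIONS  # 1/4 medium per iteration


def iterations_to_finish(size, velocity):
    """Whole iterations needed to burn up `size` at `velocity`."""
    return math.ceil(size / velocity)


feature_2_size = MEDIUM  # our quick guess: Feature 2 is also "medium"
print(iterations_to_finish(feature_2_size, velocity))  # 4
```

Using `Fraction` keeps the division exact, so rounding up to whole iterations never trips over floating-point noise.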

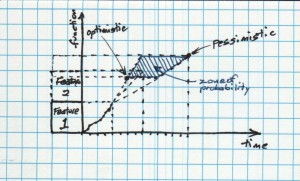

Pessimism

It’s also likely that we don’t really believe this projection. Sure, it’s the best information we have at the moment, given that we know so little, but it seems prudent to explore the “what ifs” a little. What if the feature is really 50% bigger? What if the team is slower this time around?


Yikes! Now we’re looking at 10 iterations as a pessimistic estimate.
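The text doesn’t say how much slower the team is. Working backward from the chart: a feature 50% bigger takes 6 iterations at the old pace, so reaching 10 implies the team running at 60% of its Feature 1 velocity. A sketch with those factors, where the 3/5 velocity factor is our inference, not a stated figure:

```python
from fractions import Fraction
import math

# Baseline from Feature 1: 1 medium in 4 iterations.
base_size = Fraction(1)
base_velocity = Fraction(1, 4)  # mediums per iteration

# Pessimistic "what ifs": the feature is 50% bigger and the team is slower.
# The 3/5 velocity factor is inferred from the 10-iteration projection,
# not a number given in the text.
size_factor = Fraction(3, 2)
velocity_factor = Fraction(3, 5)

pessimistic = math.ceil(
    (base_size * size_factor) / (base_velocity * velocity_factor)
)
print(pessimistic)  # 10
```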

Optimism and the Zone of Probability

What if Feature 2 turns out to be smaller than Feature 1? And the team is more productive because they’ve got some momentum as a team?


Our most optimistic estimate suggests that we’ll take only 2 iterations to implement Feature 2. By stretching both our feature-size and our team-velocity estimates in each direction, we can see the bounds of the Zone of Probability.

A range of 2 iterations to 10 iterations is a pretty wide variation. We’ve got such a large variation because our uncertainties are large with regard to both the size of the feature and the capacity of the team. We have these large uncertainties because we’re extrapolating from a single data point–the time it took this team to produce Feature 1.
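Both bounds of the zone can be sketched with one hypothetical helper. The size and velocity factors below are assumptions chosen only to reproduce the chart’s 2-to-10 range; the text doesn’t give the optimistic factors at all:

```python
from fractions import Fraction
import math


def projection_range(base_iterations, size_factors, velocity_factors):
    """Return (optimistic, pessimistic) whole-iteration projections.

    base_iterations: iterations the reference feature took.
    size_factors: (smallest, largest) size relative to the reference.
    velocity_factors: (slowest, fastest) pace relative to the reference.
    """
    small, large = size_factors
    slow, fast = velocity_factors
    # Best case: smallest feature at the fastest pace.
    optimistic = math.ceil(base_iterations * small / fast)
    # Worst case: largest feature at the slowest pace.
    pessimistic = math.ceil(base_iterations * large / slow)
    return optimistic, pessimistic


# Hypothetical factors chosen to reproduce the chart's 2-to-10 zone.
zone = projection_range(
    4,
    size_factors=(Fraction(3, 4), Fraction(3, 2)),
    velocity_factors=(Fraction(3, 5), Fraction(3, 2)),
)
print(zone)  # (2, 10)
```

Because the size uncertainty and the velocity uncertainty multiply, modest doubts about each compound into a wide zone.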

In time, we’ll have more data and can reduce the range of our uncertainty. (Or the data will show that we really are that uncertain in our delivery. Delivering at a more reliable pace is a topic for another day.) If you want more predictability, sooner, then work in smaller chunks. If the features are smaller, then the picture comes into focus much more quickly.
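One simple way to let more data tighten the projection is to bound the pace by the slowest and fastest velocities actually observed. This is a sketch of that one approach, not necessarily how the author does it; the history below is entirely hypothetical, and the result reflects only variation in pace, not errors in our size guesses:

```python
from fractions import Fraction
import math


def zone_from_history(history, remaining_size):
    """Project a remaining size using the slowest and fastest observed pace.

    history: list of (size_in_mediums, iterations_taken) for finished features.
    Returns (optimistic, pessimistic) whole iterations.
    """
    velocities = [Fraction(size) / iters for size, iters in history]
    fastest, slowest = max(velocities), min(velocities)
    return (math.ceil(remaining_size / fastest),
            math.ceil(remaining_size / slowest))


# Hypothetical history: Feature 1 plus a few later features, for illustration only.
history = [(1, 4), (1, 3), (Fraction(1, 2), 2), (2, 7)]
print(zone_from_history(history, remaining_size=1))  # (3, 4)
```

Smaller features add entries to `history` faster, which is one way to see why working in smaller chunks brings the picture into focus sooner.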

For now, I’ll leave as an exercise for the reader:

- how to handle Feature 3 and beyond

- how to handle features of different sizes

- how to sort a large stack of planned features into buckets for different sizes

- how to handle uncertainty in size when projecting further into the future

and other such issues. If you have any questions, don’t hesitate to ask.
